New Ergonomics

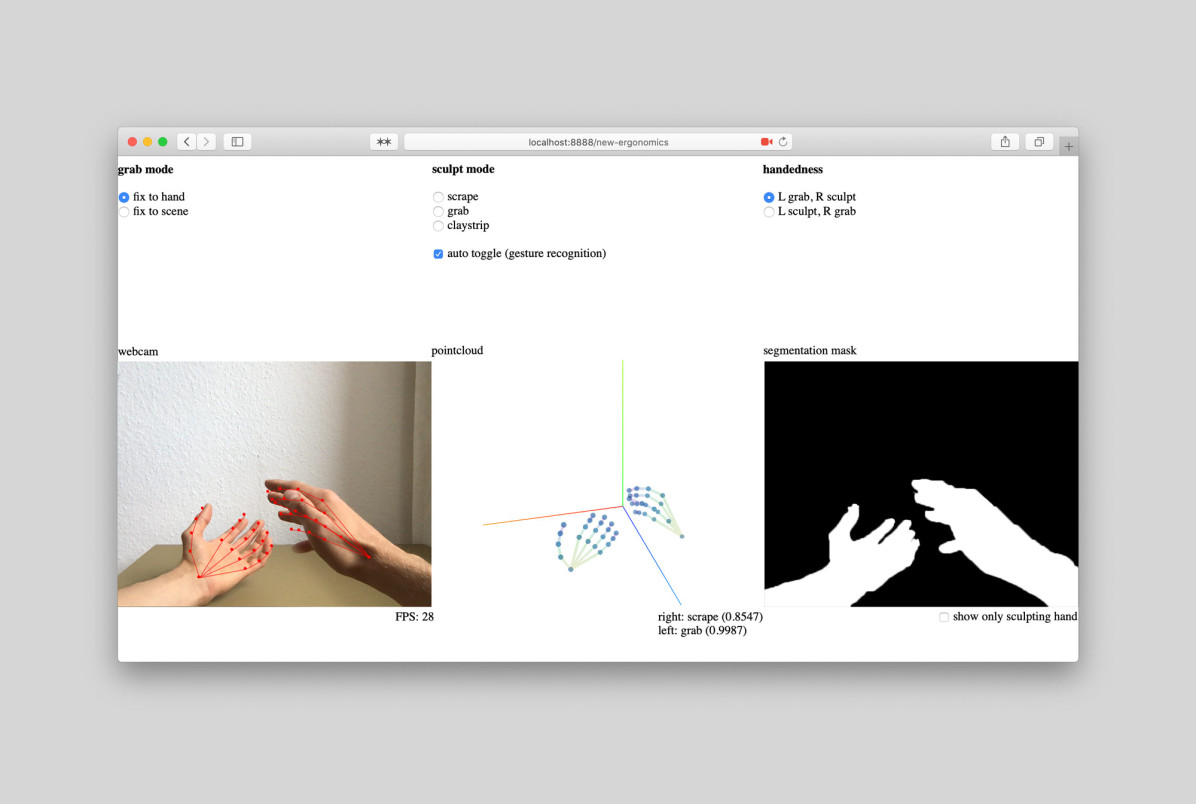

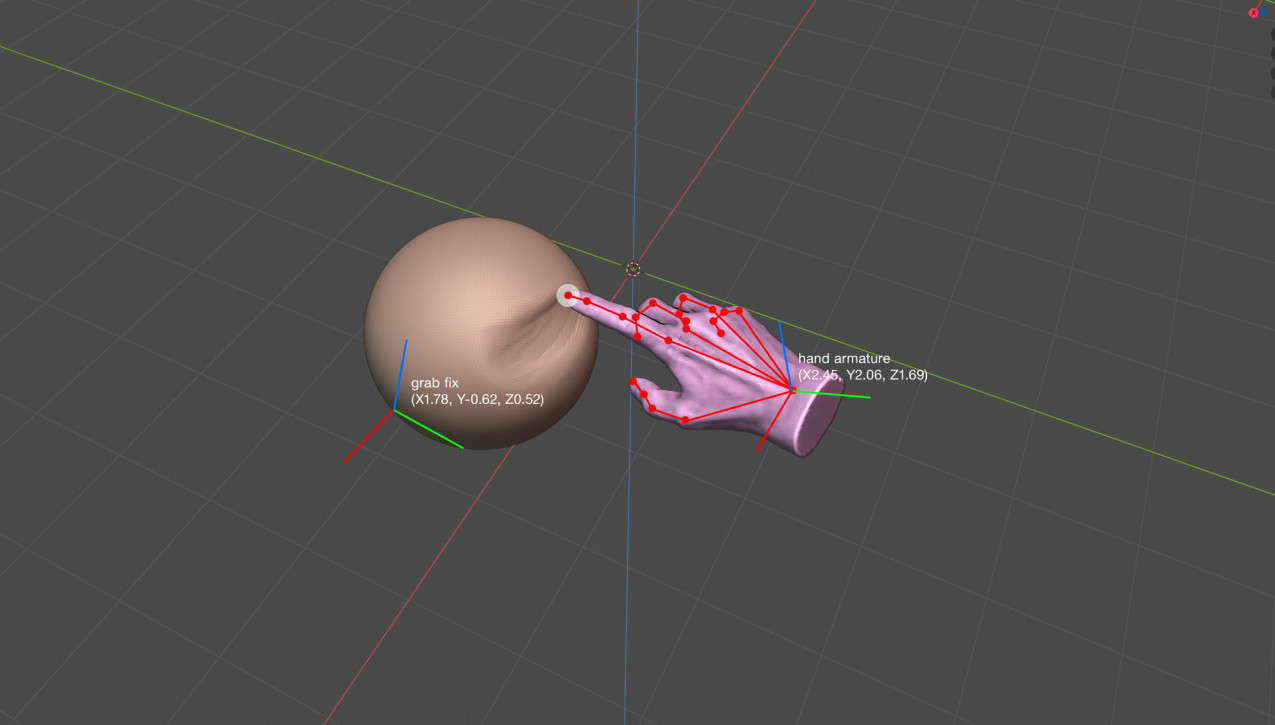

2020. A conceptual CAD modelling application that uses hand- and gesture-detection algorithms and a device equipped with a LiDAR sensor, such as an iPad.
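As an illustration of what "gesture detection" can mean here, the sketch below turns fingertip positions into a pinch gesture. It is a minimal sketch under stated assumptions, not the project's implementation: it assumes some hand-pose model reports thumb-tip and index-tip positions, and that LiDAR depth lifts them into 3D coordinates in metres. The `PinchDetector` class and its thresholds are hypothetical. Two thresholds (hysteresis) keep the gesture from flickering when the fingertips hover near a single cut-off.

```python
import math
from dataclasses import dataclass

# A 3D point in metres, e.g. a fingertip from hand tracking plus LiDAR depth (assumed input).
Point3 = tuple[float, float, float]


@dataclass
class PinchDetector:
    """Hypothetical pinch detector with hysteresis (illustrative thresholds)."""

    press_threshold: float = 0.02     # fingertips closer than 2 cm start a pinch
    release_threshold: float = 0.035  # they must move apart past 3.5 cm to release
    pinching: bool = False

    def update(self, thumb_tip: Point3, index_tip: Point3) -> bool:
        """Feed one frame of fingertip positions; return whether a pinch is active."""
        d = math.dist(thumb_tip, index_tip)
        if self.pinching:
            # Only release once the fingers are clearly apart.
            if d > self.release_threshold:
                self.pinching = False
        elif d < self.press_threshold:
            self.pinching = True
        return self.pinching


if __name__ == "__main__":
    det = PinchDetector()
    for gap in (0.05, 0.015, 0.03, 0.04):
        print(gap, det.update((0.0, 0.0, 0.3), (gap, 0.0, 0.3)))
```

With a fingertip gap of 3 cm the detector stays in whatever state it was already in, which is the point of using two thresholds instead of one.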

Advances in machine learning and visual computing give us new opportunities for interface design. For decades, our interaction with interfaces has consisted mostly of using our index finger. This reduction of the body to a single finger strips away the fine-grained input and natural ways of manipulation that our hands are capable of. 3D objects can be displayed in augmented reality and manipulated directly, and the user experience mimics that of real-life clay sculpting. This new way of modelling leads to more natural and ergonomically formed objects.
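To make the clay-sculpting analogy concrete, here is one common way a "press into clay" brush can work: vertices near the fingertip are pushed along a direction, with a smooth falloff so the dent has soft edges. This is a hedged sketch, not the project's method. The function `sculpt_push`, its parameters, and the falloff kernel w = (1 - (d/r)^2)^2 are assumptions chosen for illustration; the mesh is reduced to a plain list of vertex positions in metres.

```python
import math

# A 3D vertex or vector in metres (assumed representation).
Vec3 = tuple[float, float, float]


def sculpt_push(
    vertices: list[Vec3],
    tip: Vec3,
    direction: Vec3,
    radius: float = 0.05,
    strength: float = 0.01,
) -> list[Vec3]:
    """Hypothetical clay-like brush: displace vertices near the fingertip.

    vertices:  mesh vertex positions
    tip:       fingertip position (assumed to come from hand tracking plus depth)
    direction: unit vector the finger pushes along
    radius:    brush radius; vertices at or beyond it are left untouched
    strength:  maximum displacement, applied at the fingertip itself
    """
    out: list[Vec3] = []
    for v in vertices:
        d = math.dist(v, tip)
        if d >= radius:
            out.append(v)
            continue
        # Smooth falloff: weight 1 at the tip, falling to 0 at the radius.
        w = (1.0 - (d / radius) ** 2) ** 2
        out.append(tuple(c + strength * w * k for c, k in zip(v, direction)))
    return out


if __name__ == "__main__":
    mesh = [(0.0, 0.0, 0.0), (0.025, 0.0, 0.0), (0.1, 0.0, 0.0)]
    print(sculpt_push(mesh, tip=(0.0, 0.0, 0.0), direction=(0.0, 0.0, -1.0)))
```

Calling the brush once per tracked frame while a pinch or touch is held would deepen the dent gradually, which is closer to how clay yields than a single large displacement.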
